Reversible computing is a model of computation in which every step can be run backward as well as forward.

As far as anyone knows, the laws of physics are reversible: that is, you can run them in reverse and recover any earlier state of the universe. This means that from a physical perspective, information can never be destroyed, only shuffled around. A process is said to be physically reversible if it results in no increase in physical entropy; it is isentropic.

Reversible Logic

The first condition for any deterministic device to be reversible is that its input and output be uniquely retrievable from each other. This is called logical reversibility. If, in addition to being logically reversible, a device can actually run backwards then it is called physically reversible and the second law of thermodynamics guarantees that it dissipates no heat.

| CNOT | | | |
|---|---|---|---|
| INPUT | | OUTPUT | |
| 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 1 |
| 1 | 0 | 1 | 1 |
| 1 | 1 | 1 | 0 |

For one input bit, there are two possible reversible gates. One of them is NOT. The other is the identity gate, which maps its input to the output unchanged. For two input bits, the only non-trivial gate is the controlled NOT gate, which XORs the first bit to the second bit and leaves the first bit unchanged.
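As a minimal sketch of the gates just described, the Python below models NOT and CNOT as plain functions on bits and checks that each one undoes itself. The function names are illustrative and not taken from any library.

```python
from itertools import product

def not_gate(a):
    """Flip a single bit."""
    return a ^ 1

def cnot(a, b):
    """Controlled NOT: keep a unchanged, XOR a into b."""
    return a, a ^ b

# Applying either gate twice returns the original input,
# so each gate is its own inverse.
for a in (0, 1):
    assert not_gate(not_gate(a)) == a

for a, b in product((0, 1), repeat=2):
    assert cnot(*cnot(a, b)) == (a, b)

# The truth table of CNOT is a permutation of the four 2-bit states.
cnot_table = {(a, b): cnot(a, b) for a, b in product((0, 1), repeat=2)}
```

Because the table is a permutation, reading it from output to input gives a valid gate as well, which is what logical reversibility asks for.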

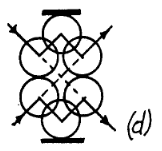

The Toffoli and Fredkin gates are reversible and universal, which means that any logical or arithmetic operation can be constructed entirely from either one of them. A Toffoli gate becomes a NAND gate when its target bit is initialized to 1.

| TOFFOLI | | | | | |
|---|---|---|---|---|---|
| INPUT | | | OUTPUT | | |
| C | I1 | I2 | C | O1 | O2 |
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 0 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 | 0 |
| 0 | 1 | 1 | 0 | 1 | 1 |
| 1 | 0 | 0 | 1 | 0 | 0 |
| 1 | 0 | 1 | 1 | 0 | 1 |
| 1 | 1 | 0 | 1 | 1 | 1 |
| 1 | 1 | 1 | 1 | 1 | 0 |
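The rows of the table above match a Toffoli gate whose first two columns are the controls and whose third column is the target. The sketch below, with illustrative function names, writes out both three-bit gates, checks that each is its own inverse, and builds NAND by feeding the Toffoli target a known 1.

```python
from itertools import product

def toffoli(a, b, c):
    """Flip the target c only when both controls a and b are 1."""
    return a, b, c ^ (a & b)

def fredkin(c, a, b):
    """Swap a and b only when the control c is 1."""
    return (c, b, a) if c else (c, a, b)

def nand_via_toffoli(a, b):
    """NAND from a Toffoli gate whose target starts as a known 1."""
    _, _, out = toffoli(a, b, 1)
    return out

for bits in product((0, 1), repeat=3):
    # Running either gate twice restores the input.
    assert toffoli(*toffoli(*bits)) == bits
    assert fredkin(*fredkin(*bits)) == bits

for a, b in product((0, 1), repeat=2):
    assert nand_via_toffoli(a, b) == 1 - (a & b)
```

The two control bits come out unchanged next to the NAND result, so the inputs can still be recovered from the outputs.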

In reversible computing, all operations must be reversible, and forcing a bit on or off would lose the information about the initial value of that bit. For this reason, there is no way to deterministically put bits in a specific prescribed state unless one is given access to bits whose original state is known in advance. Such bits, whose values are known a priori, are known as ancilla bits.

The common AND gate is not reversible, because the inputs 00, 01 and 10 are all mapped to the output 0. The original motivation for reversible logic gates was that reversible gates dissipate less heat.
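One way to make this concrete is to test whether a gate ever maps two different inputs to the same output. The sketch below does that for AND, then for a variant that writes the AND result into an ancilla bit and keeps both inputs. The helper `is_reversible` and the name `reversible_and` are hypothetical, chosen only for this example.

```python
from itertools import product

def is_reversible(gate, n_inputs):
    """A gate is logically reversible when no two inputs share an output."""
    outputs = [gate(*bits) for bits in product((0, 1), repeat=n_inputs)]
    return len(set(outputs)) == len(outputs)

def and_gate(a, b):
    """Ordinary AND: two bits in, one bit out."""
    return a & b

def reversible_and(a, b, ancilla=0):
    """AND XORed into a third bit; both inputs are passed through."""
    return a, b, ancilla ^ (a & b)

print(is_reversible(and_gate, 2))        # False
print(is_reversible(reversible_and, 3))  # True
```

When the ancilla is known to be 0, the third output is exactly `a AND b`, and the extra outputs carry the information that the plain AND gate would have discarded.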

Energy Consumption of Computation

Landauer's principle holds that any logically irreversible manipulation of information, such as the erasure of a bit or the merging of two computation paths, must be accompanied by a corresponding entropy increase in non-information-bearing degrees of freedom of the information-processing apparatus or its environment.
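The principle is usually quantified as a lower bound of k_B · T · ln 2 joules of heat per erased bit, where k_B is the Boltzmann constant and T is the temperature of the environment. That formula is not stated in the text above, so the short sketch below is only an illustration of the scale involved, with a hypothetical function name.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K, fixed by the SI definition of the kelvin

def landauer_limit(temperature_kelvin):
    """Minimum heat in joules released by erasing one bit at this temperature."""
    return BOLTZMANN * temperature_kelvin * math.log(2)

# Around room temperature the bound is a few zeptojoules per erased bit.
room = landauer_limit(300.0)
print(f"{room:.3e} J per bit at 300 K")
```

The bound scales linearly with temperature, and it applies only to irreversible steps; a logically reversible step has no such minimum.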

| Self-Reversible | | |
|---|---|---|
| swp ( a b -- b a ) | | |
| sign ( a -- -a ) | | |
| not ( a -- ~a ) | | |
| cnot ( a b -- c d ) | | |
| Reversible | | |
| rot ( a b c -- b c a ) | -rot ( a b c -- c a b ) | |
| csr ( a b -- a~>b ) | csl ( a -- a<~b ) | |
| Reversible Arithmetic | | |
| add ( a b -- a b+a ) | sub ( a b -- a a-b ) | |
| mad ( a b c -- a+b*c c ) | dam ( a b -- a%b a/b b ) | |
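A few of the operators in the table can be sketched on a Python list used as a stack, with the top of the stack at the end of the list. This is an illustration under stated assumptions, not the author's implementation: `unrot` stands for `-rot` and is taken to be the inverse of `rot`, that is ( a b c -- c a b ), and `dam` only undoes `mad` when the operands are non-negative integers with a smaller than c.

```python
def swp(s):
    """( a b -- b a ), its own inverse."""
    s[-2], s[-1] = s[-1], s[-2]

def rot(s):
    """( a b c -- b c a )"""
    s[-3], s[-2], s[-1] = s[-2], s[-1], s[-3]

def unrot(s):
    """-rot ( a b c -- c a b ), assumed here to be the inverse of rot."""
    s[-3], s[-2], s[-1] = s[-1], s[-3], s[-2]

def mad(s):
    """( a b c -- a+b*c c ), multiply-add that keeps c."""
    c = s.pop()
    b = s.pop()
    a = s.pop()
    s += [a + b * c, c]

def dam(s):
    """( a b -- a%b a/b b ), integer remainder and quotient that keep b."""
    b = s.pop()
    a = s.pop()
    s += [a % b, a // b, b]

stack = [2, 5, 7]
mad(stack)  # stack is now [37, 7]
dam(stack)  # stack is back to [2, 5, 7]
```

Keeping c on the stack is what lets `dam` recover a and b: the remainder gives back a, and the quotient gives back b.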

Microprocessors which are reversible at the level of their fundamental logic gates can potentially emit radically less heat than irreversible processors, and someday that may make them more economical than irreversible processors. The Pendulum microprocessor is a logically reversible computer architecture that resembles a mix of PDP-8 and RISC.

The promise of reversible computing is that the amount of heat loss for reversible architectures would be minimal for significantly large numbers of transistors. Rather than creating entropy (and thus heat) through destructive operations, a reversible architecture conserves the energy by performing other operations that preserve the system state.

An erasure of information in a closed system is always accompanied by an increase in energy consumption.

Linear Logic

A linear system excludes combinators that have a duplicative effect, as well as those that have a destructive effect. A linear logic computer language avoids the need for garbage collection by explicit construction and destruction of objects. In a reversible machine, garbage collection for recycling storage can always be performed by a reversed sub-computation. The "dangling reference problem" cannot happen in a linear language because the only name occurrence for an object is used to invoke its destructor, and the destructor doesn't return the object.

Most Forth operators take their operands from the top of the stack and return their values to the top of the stack. The language's absence of variable names is characteristic of combinators, so the programming of Forth operators can be seen as the construction of larger combinators from smaller ones. A Forth which incorporates only stack permutation operations like swap, rotate, and roll must be linear, because it has no copying or killing operators.
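The linearity claim can be checked mechanically: a permutation operator leaves the multiset of stack items unchanged, while a copying or killing operator does not. The sketch below models the stack as a Python list with the top at the end. The helper `preserves_items` is hypothetical, and `roll` follows the usual Forth convention in which `2 roll` behaves like a rotate.

```python
from collections import Counter

def swap(s):
    """( a b -- b a )"""
    s[-2], s[-1] = s[-1], s[-2]

def roll(s, n):
    """Move the item n places below the top to the top of the stack."""
    s.append(s.pop(-1 - n))

def dup(s):
    """( a -- a a ) copies a value, so it is not linear."""
    s.append(s[-1])

def drop(s):
    """( a -- ) kills a value, so it is not linear."""
    s.pop()

def preserves_items(op, stack, *args):
    """True when op only rearranges the stack, neither copying nor killing."""
    before = Counter(stack)
    work = list(stack)  # operate on a copy so the caller's stack is untouched
    op(work, *args)
    return Counter(work) == before

stack = ["x", "y", "z"]
print(preserves_items(swap, stack))     # True
print(preserves_items(roll, stack, 2))  # True
print(preserves_items(dup, stack))      # False
print(preserves_items(drop, stack))     # False
```

Any program built only from operators that pass this check is itself a permutation of the stack, so it can be undone by applying the inverse permutation.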

For an example of reversible cellular automata, see Fractran and Qu-Ants.

- Computers that can run backward

- Software Simulator of Reversible Processor with Stack

- The Aleph Calculus
